Insight & Strategy: Google Creatability

How Google used the power of the web and AI to improve the accessibility of art and music

This article was originally published in Contagious I/O on 28 June 2019

Google has launched a series of experiments to help make experiencing and creating art and music more accessible.

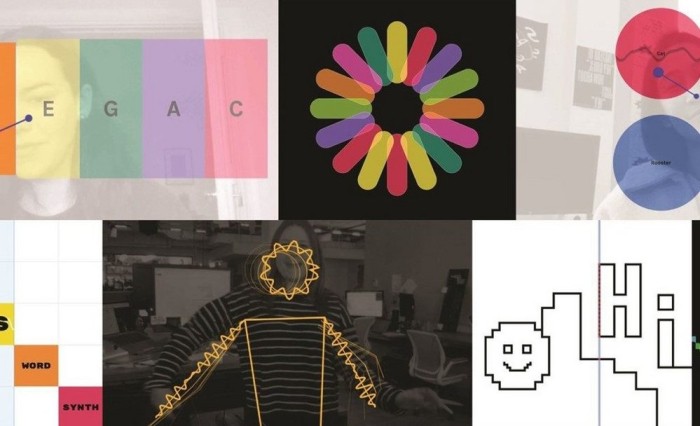

The Creatability project uses artificial intelligence and the web to make things like drawing or appreciating music something that people can do even if they have a visual impairment or are deaf. The creative tools, created by the Google Creative Lab team in collaboration with organisations and individuals representing disabled communities, allow data to be entered in different ways depending on what works best for the user. For example, one of the experiments, called Sound Canvas, is a drawing tool that enables people to sketch lines on a screen in a variety of ways, whether that's using a stylus or a mouse, or a webcam to track their head movements. [...] based on the rising sound being detected from the computer's microphone. Meanwhile, the Keyboard tool enables people to play a musical keyboard in a variety of ways, including by using keys or a mouse or tracking their movements via a webcam.

There are currently seven experiments available on the Creatability site and users only require an ordinary web camera, a web browser and internet access to try out the different experiments for themselves.

In the case study video for the project, Google explains that by researching solutions for those with accessibility needs, other solutions are often discovered for wider audiences. The project is open-source and Google is inviting developers to submit their own experiments.

Following the Creatability project's Grand Prix win in the Design category at Cannes Lions 2019, we spoke to Alexander Chen, a creative director at Google Creative Lab.

What is Google’s biggest challenge right now?

One of the best things about working at a place like Google is our access to technology that has limitless opportunities for the wider world. So it becomes less a question of what Google can do and more a question of what Google should do. Whether that be something created specifically for one person or for billions, the challenge is deciding on the right opportunities for these technologies and creating the most helpful innovations possible for people.

Did you receive a brief for Creatability?

One of the interesting things about working at Creative Lab, and at Google in general, is that we sometimes need to find the brief ourselves. There is, however, a ‘high-level brief’ that we try to hold ourselves to every day: 'How do we democratise AI and make technology accessible for everyone?' That is the brief we try to hold in our heads as we navigate different projects and opportunities at Google.

How did the idea for Creatability originate?

The Creatability project hits directly on the seam of what Google can and should be doing as a business. The idea started when Google Creative Lab was collaborating with Google AI researchers. We unlocked an exciting new technology that can detect the key points on a person’s body, all captured from normal web camera footage. So an ordinary camera can pick up whether the person is standing, where their shoulders are positioned or the location of their elbows. This sparked a conversation about what we should do with this tech, and there really was no right answer. If you gave this tech to any of Google’s different departments, they would each have a different, unique angle on what to do with it. We decided to look at this tech from a different perspective and challenge ourselves to create a unique solution that didn’t serve our own immediate needs. This is when we reached out to some of our partners for this project, namely Claire Kearney-Volpe from the NYU Ability Project and Barry Farrimond at Open Up Music. They had already been working with people with limited mobility or unique needs. We asked them, 'What would the people you work with want to do with this type of technology?'

How long did production on Creatability take?

Our creative process on Creatability took just under a year. Google Creative Lab started concepting in early 2018, following on from the great work already done on the webcam tracking technology. Our Creative Lab team then took this machine learning model and thought it would be exciting to use in a web browser.

Where did the name ‘Creatability’ originate from?

For Creatability, we wanted to include a variety of people with different abilities and have them be instrumental in the design process. But we also discovered through our work that we shouldn’t be thinking of these people as a separate community or framing the project as a design initiative for differently abled people. We said the word ‘accessibility’ a considerable amount in the production of these experiments. However, this was framing people exactly how they didn’t want to be portrayed. In short, the name Creatability helps demonstrate that this is a universal tool, not just an accessibility project. We are not unlocking the creativity in these disabled people. They have always had the creative spirit inside of them; we are simply making tools to make things a little more accessible.

Who was the target audience for this campaign?

We wanted to talk about this project with the world, because everyone benefits from hearing about these amazing possibilities. You don’t need to be a disability or design expert to get excited about the Creatability project. Everyone should see the potential for a more inclusive and accessible future for all. We also wanted to ensure that anyone who could benefit from this tech would see it, which is why we also made the technology open source, for everyone to use.

When did Google turn its attention towards accessibility and technology for disabled people?

We want to think about accessibility all the way through the design process, and we understand that this can be a development challenge for other companies. Some firms work with limited resources, so they end up deprioritising these things, which is why accessibility can be a huge challenge. Starting with accessibility at the top of your mind from day one forces you into certain design habits: clearly labelling buttons and menus, using as few words as possible to describe things and being ultra-clear in your writing. But all design and creativity processes are better when you think about accessibility from the beginning, not at the last stage when you bring in a design expert to harshly pivot towards accessibility.

Can you provide any examples of how the Creatability project has gone on to make even bigger strides in technology for the masses?

We are certainly at the starting point, but Google hopes that Creatability has a long future, with people taking these experiments and creating even more amazing tools. For example, the Seeing Music tool that we developed in partnership with deaf composer Jay Alan Zimmerman is now being used in scientific sound exploration. Before, scientists were just using a spectrogram to look at humpback whale sounds. Now there is a whole set of design tools to visualise these noises.

One of the main things we wanted to make sure of was that the launch featured the heroes in the accessibility world and that the launch material reflected the huge contributions they have made to creating a more accessible planet. In the launch video, we wanted these innovators to speak on behalf of the project, leaning on them to spread the word in their own communities. For example, Jay Alan Zimmerman has been working with deaf music educators in Columbia, brainstorming what a musical curriculum would look like for deaf students. We have also used our own channels, such as our blog, to spread the word and make sure that communities who need to know about these tools are hearing about them.

The campaign video talks about the pioneers in accessibility. Do you see Google as a leader in providing accessibility for all?

Google certainly aspires to be a pioneer in accessibility, but it is tough to answer that question objectively. There are still so many obstacles that need to be overcome in different communities. We frequently find ourselves inspired by what other tech companies are doing, and that sort of healthy cross-inspiration is helpful for all.

What is Google doing outside of projects such as Creatability to further accessibility for all?

Google has several other initiatives that are focused on making accessibility better for everyone. For example, we have the Live Caption project, which allows every sound source from your smartphone to be captioned. It was originally designed as a tool for deaf people, but is now a useful program for all. Another project that Google Creative Lab assisted on was Tania’s Story, which put Morse Code into the keyboard of phones, enabling people with restricted mobility to type through a two-button interface. Finally, there is Project Euphonia, which was a collaboration with Steve Saling, who is an accessibility technologist for people with motor neuron disease, which he has himself. This project is teaching learning algorithms to react quickly, so people like Steve can react in real time to a sports game with an airhorn or another sound effect to match the emotion they want to convey.